This article about a US startup is quite interesting. They are using a new approach to greatly reduce the size of optical cameras in space, through a combination of novel electronics and AI-based image reconstruction. Note that this is software-based AI. It will be useful in satellites and rovers. If you follow the job link you'll see that they intend to eventually use this camera technology in the automotive, medical, robotics and industrial areas, which are also areas Brainchip has said it is working in.

If you follow the link at the bottom of the article to the AI consultant advertisement, you'll see that they are looking for someone experienced with CNN algorithms, Python and TensorFlow. Since they want to run AI applications in space, a hardened Akida chip would be the best choice as it is low SWaP (size, weight and power), runs CNNs and is just becoming available for commercial opportunities. Additionally, NASA has sole-sourced Akida chips so they are likely to become the standard going forward.

Pure speculation, DYOR

https://www.wnf.washington.edu/small-business-awards-from-darpa-and-nasa-fuel-growth-of-uw-spinout-tunoptix/

October 19, 2021

Small Business awards from DARPA and NASA fuel growth of UW spinout Tunoptix

Arka Majumdar (left) and Karl Böhringer (right)

Tunoptix, a Seattle-based optics startup co-founded by University of Washington (UW) electrical and computer engineering professors Karl Böhringer and Arka Majumdar, was awarded a $1,500,000 Small Business Technology Transfer (STTR) Phase II grant from the Defense Advanced Research Projects Agency (DARPA). This highly competitive award provides funding for Tunoptix to continue developing next-generation imaging systems for use on satellites or aircraft where weight, size and power are critical.

Lenses with large apertures, the opening through which light travels, are needed to capture images in low-light environments. The extremely large aperture lenses currently used by the defense and aerospace industries for surveillance and space exploration are generally very heavy and bulky. For example, the lens in the Hubble space telescope, which has an aperture of about 2 meters, is roughly 1,000 kilograms. Reducing the size, weight and power of such optical systems would significantly reduce their cost.

Meta-optics – engineered surfaces consisting of an array of nanoscale structures that can focus light – are emerging as a promising alternative to traditional lenses. Although meta-optics are lightweight and exceptionally thin (< 1mm), they cannot generate high quality color images. There are some solutions for full-color imaging, but all of them are generally limited to small apertures, about 100 – 200 micrometers in diameter. Tunoptix is developing large aperture (~1cm-10cm) metalenses combined with advanced AI-based image reconstruction software to capture high quality full-color images.
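To put the aperture figures above in perspective, here is a rough back-of-the-envelope sketch. It assumes only that, all else being equal, the light a circular aperture collects scales with its area (diameter squared). It ignores transmission efficiency, focal length and sensor differences, and the function names are made up for illustration.

```python
import math


def aperture_area_um2(diameter_um: float) -> float:
    """Area of a circular aperture, in square micrometres."""
    return math.pi * (diameter_um / 2) ** 2


def light_gain(large_um: float, small_um: float) -> float:
    """How many times more light the larger aperture collects (area ratio)."""
    return aperture_area_um2(large_um) / aperture_area_um2(small_um)


# Diameters taken from the article, converted to micrometres.
small_full_colour = 200                    # upper end of the 100-200 micrometre range
large_low, large_high = 10_000, 100_000    # roughly 1 cm and 10 cm

print(f"1 cm vs 200 um:  ~{light_gain(large_low, small_full_colour):,.0f}x the light")
print(f"10 cm vs 200 um: ~{light_gain(large_high, small_full_colour):,.0f}x the light")
```

On this simple area argument, going from a 200 micrometre aperture to a 1 cm one is a gain of a few thousand times, which is why scaling up the aperture matters for low-light imaging.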

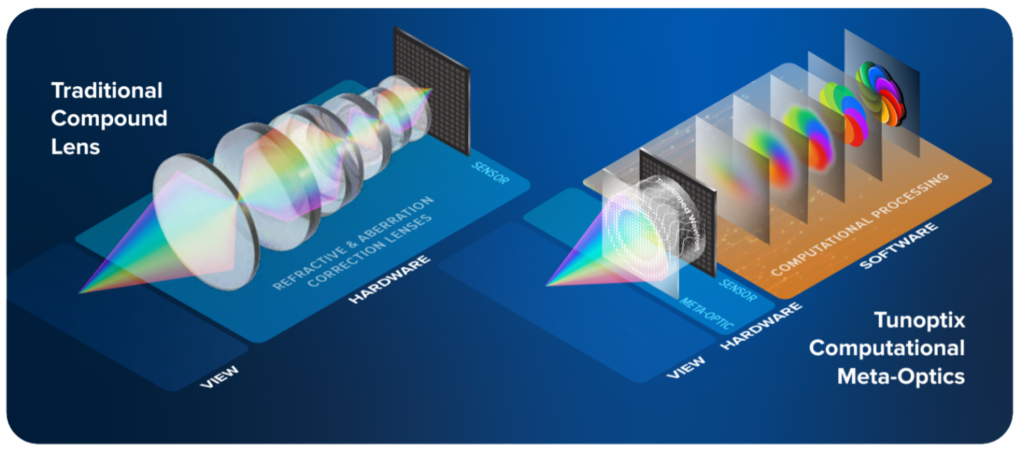

Comparison between a conventional compound refractive imaging system and a Tunoptix current-generation computational imaging system. The conventional imaging system requires multiple optics to correct chromatic and geometric aberrations, resulting in a heavy and bulky package. The Tunoptix computational meta-optic is a single-element, flat solution with a drastically reduced package weight and size that encodes information from the scene. The computational imaging software is jointly designed with the meta-optic and recovers the high-quality image or desired information from the data in the intermediate image encoded by the meta-optic. Image courtesy of Tunoptix.

Tunoptix was previously awarded a $223k DARPA STTR Phase I grant to establish the feasibility of scaling their metasurface designs up to 10 centimeters. This STTR Phase II award will allow the company to begin fabricating its large aperture metasurface designs at the UW Washington Nanofabrication Facility (WNF), an open-access user facility that provides academic researchers and industry professionals access to nanofabrication tools and expertise.

A portion of the Phase II work will be carried out by the laboratory of Felix Heide, a computer science professor at Princeton University. Heide’s computational imaging lab will evaluate the imaging capability of Tunoptix’s metasurfaces and help optimize the algorithms used for image reconstruction.
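The article does not say how Tunoptix's reconstruction software works, so the following is only a toy illustration of the general computational imaging idea: the optic produces a blurred intermediate image and software recovers the scene from it. It uses classical Wiener deconvolution on a 1-D signal rather than any AI-based method. The Gaussian blur, the `noise_to_signal` value and all function names are assumptions chosen for the demo.

```python
import cmath
import math


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform (fine for a short demo signal)."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        acc = 0j
        for t in range(n):
            acc += x[t] * cmath.exp(sign * 2j * math.pi * k * t / n)
        out.append(acc / n if inverse else acc)
    return out


def circular_blur(signal, psf):
    """Simulate the optic: circular convolution of the scene with the blur kernel."""
    S, H = dft(signal), dft(psf)
    return [v.real for v in dft([s * h for s, h in zip(S, H)], inverse=True)]


def wiener_deconvolve(measured, psf, noise_to_signal=1e-6):
    """Estimate the scene from the blurred measurement with a Wiener filter."""
    M, H = dft(measured), dft(psf)
    restored = [m * h.conjugate() / (abs(h) ** 2 + noise_to_signal)
                for m, h in zip(M, H)]
    return [v.real for v in dft(restored, inverse=True)]


# Hypothetical 1-D "scene": two bright points on a dark background.
n = 32
scene = [0.0] * n
scene[8] = 1.0
scene[20] = 0.5

# Assumed blur kernel: a normalised Gaussian centred on index 0 (wrapped around).
sigma = 1.0
raw = [math.exp(-0.5 * (min(i, n - i) / sigma) ** 2) for i in range(n)]
psf = [v / sum(raw) for v in raw]

measured = circular_blur(scene, psf)          # what the sensor would record
recovered = wiener_deconvolve(measured, psf)  # what the software reconstructs

blur_error = max(abs(a - b) for a, b in zip(measured, scene))
recovery_error = max(abs(a - b) for a, b in zip(recovered, scene))
print(f"max error before reconstruction: {blur_error:.3f}")
print(f"max error after reconstruction:  {recovery_error:.3f}")
```

This demo is noise-free and the blur kernel is known exactly, which is the easy case. Real systems have sensor noise and wavelength-dependent blur, which is presumably where learned reconstruction comes in.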

While the focus of Tunoptix’s work for DARPA has been imaging in the visible light range, their approach can work for any wavelength. Tunoptix recently received a NASA Small Business Innovation Research (SBIR) Phase I award to develop a compact hyperspectral imaging (HSI) system using their meta-optics technology. Compact HSI systems will be especially useful in carrying out satellite-based and rover-based imaging of planetary surfaces, airborne remote sensing of coastal and oceanic regions, and inspection of mission-critical satellite systems in space.

“We are focused on revolutionizing the way optical systems are conceptualized, designed and manufactured,” said Majumdar. “Using well-established, high precision, low-cost semiconductor manufacturing techniques we are creating new, simple optical elements that will be critical for the evolution of optical design in the digital age. Moreover, by incorporating computational algorithms on the backend, we can improve image quality leaps and bounds over what was achievable using just meta-optics.”

For those interested in advancing this technology, Tunoptix is hiring several positions including an optical test engineer, software developer and AI consultant.

AI consultant job:

POSITION – AI Consultant, Computer Vision (Part-time Consultant)

Reporting to the Director of System Design, the AI Consultant will collaborate with a talented team of engineers and scientists to advise and assist in the development of machine learning software for use in cutting edge computational imaging systems. This includes algorithms for various learned imaging tasks (e.g., OCR, image classification, detection, and segmentation). This is an exciting opportunity for a highly motivated and capable engineer to help shape next generation imaging systems for industrial machine vision, medical instrumentation, automobiles, robotics, and consumer electronics.

RESPONSIBILITIES

• Work closely with the Company’s founders and technical team to advise and assist in the development, training, and validation of software for specific learned imaging tasks (e.g., character recognition, 3D vision, image classification, detection, and segmentation)

• Advise the technical team on AI/ML/Deep learning research and advances relevant to the Company’s goals

• Provide analyses and trade studies of performance tradeoffs for different network architectures in terms of power consumption, latency, accuracy, and memory usage

• Consolidate and prepare reports detailing techniques and best practices for deploying the developed software, network pruning techniques, and training strategies

QUALIFICATIONS

• PhD in Computer Science, Electrical Engineering, or equivalent technical discipline with a focus on AI/ML/Deep Learning and Computer Vision

• 5+ years of experience in developing and training ML models for imaging tasks

• Well-versed in state-of-the-art CNN architectures and ML literature

• Extensive experience developing image processing algorithms using classical and convolutional neural network-based approaches

• Experience with network pruning techniques such as knowledge distillation

• Significant experience using Python and TensorFlow

• Understanding of information theory and signal/image processing fundamentals

• Familiarity with optics and imaging systems (e.g., lenses, diffractive optics, camera sensors)

• Strong interpersonal and technical communication skills

• Ability to bridge technology development with commercial demands

• Familiar with the startup or early-stage business environment

• Entrepreneurial spirit, with a hands-on, roll-up-the-sleeves mentality
